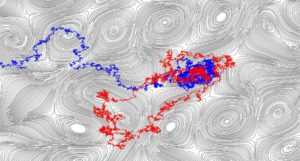

We have just uploaded our paper, joint with Ahmed Bou-Rabee and Tuomo Kuusi, titled “Superdiffusive central limit theorem for a Brownian particle in a critically-correlated incompressible random drift,” to the arXiv. The results in this paper were recently announced by Ahmed in several public talks, including this one at the Fields Institute.

The paper is about the long-time behavior of a Brownian particle advected by a random, incompressible vector field. We consider the solution $X_t$ of the stochastic differential equation

(1) $dX_t = \mathbf{f}(X_t)\,dt + \sqrt{2\nu}\,dW_t$

where $\nu > 0$ is a small parameter called the molecular diffusivity and $\mathbf{f}$ is a stationary random vector field assumed to be divergence-free, isotropic in law, and to have “critical” correlations. The model example covered by our assumptions is the case in two dimensions in which $\mathbf{f} = \nabla^\perp H$, where $H$ is a Gaussian free field.
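For intuition about the structure of (1), here is a minimal Euler-Maruyama simulation sketch, writing `nu` for the molecular diffusivity. The stream function $H(x, y) = \sin x \sin y$ is a hypothetical periodic stand-in chosen only because its grad-perp is explicit and divergence-free. It is not a Gaussian free field and its correlations are not critical, so this toy field sits in the classical diffusive regime and does not reproduce the superdiffusive behavior discussed in the post.

```python
import math
import random


def grad_perp_drift(x, y):
    """Toy incompressible drift f = (-dH/dy, dH/dx) for H(x, y) = sin(x) * sin(y).

    This H is an illustrative assumption, not the Gaussian free field.
    """
    return (-math.sin(x) * math.cos(y), math.cos(x) * math.sin(y))


def simulate(nu, t_final, dt, rng):
    """Euler-Maruyama for dX = f(X) dt + sqrt(2 nu) dW, started at the origin."""
    x = y = 0.0
    noise_scale = math.sqrt(2.0 * nu * dt)  # std dev of each noise increment
    for _ in range(int(round(t_final / dt))):
        fx, fy = grad_perp_drift(x, y)
        x += fx * dt + noise_scale * rng.gauss(0.0, 1.0)
        y += fy * dt + noise_scale * rng.gauss(0.0, 1.0)
    return x, y


def mean_square_displacement(nu, t_final, dt=0.01, n_paths=200, seed=0):
    """Monte Carlo estimate of E[|X_t|^2] over independent paths, for this fixed drift."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_paths):
        x, y = simulate(nu, t_final, dt, rng)
        total += x * x + y * y
    return total / n_paths
```

Calling `mean_square_displacement(nu, t)` for increasing `t` gives a rough empirical picture of how the mean-square displacement grows for this one fixed field, which is the quenched point of view (no averaging over the drift).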

The general setup of (1) is classical. Since the vector field is incompressible, there are no sources or sinks and therefore we expect diffusion to be enhanced by the advection. If the vector field $\mathbf{f}$ has correlations which decay sufficiently fast, the process $X_t$ behaves diffusively for large times. Indeed, one can prove a scaling limit to a Brownian motion with covariance matrix $2\bar{\mathbf{a}}$, for some effective diffusion matrix $\bar{\mathbf{a}}$ which is larger than $\nu I_d$. What “sufficiently fast” means in this context is that there exists ![]() such that

(2) ![]()

If the covariances of the vector field satisfy (2) for ![](), one expects to see very different, superdiffusive behavior. Here the long wavelengths of the vector field continue to cause enhancements of the diffusivity at every length scale, and if the amplitudes of these waves are large enough then they can cause the diffusivity to diverge as a function of the length scale. This case is much less well understood mathematically, due to the difficulty in analyzing the interaction of an infinite number of length scales.

This problem has been studied in the physics literature, where one can find heuristic predictions based on renormalization group arguments.

Our paper concerns the borderline case in which (2) is valid for ![](). In this regime, the physicists predicted (in the 1980s) a logarithmic-type superdiffusivity:

(3) $\mathbf{E}^0\bigl[|X_t|^2\bigr] \simeq t\sqrt{\log t}$ as $t \to \infty$,

where $\mathbf{E}^0$ is the expectation with respect to the process starting from the origin (but not with respect to the vector field). This problem has attracted the recent attention of mathematicians (see here, here and here). These results have established (3), in the two-dimensional model example described above, up to a $\nu$-dependent prefactor and in an annealed sense, that is, after averaging simultaneously with respect to the process and the vector field $\mathbf{f}$.

Our main result: a quenched superdiffusive central limit theorem

The main result of our paper says that, almost surely with respect to the vector field, the process $X_t$ has a scaling limit to Brownian motion with a precise, superdiffusive rate.

Theorem (A. & Bou-Rabee & Kuusi 2024) There exists a constant $c_* > 0$ (which in the special case of the grad-perp of the 2d GFF is equal to ![]()) such that

(4) ![]()

where ![]() is a standard Brownian motion on $\mathbb{R}^d$. Moreover, for every ![]() and ![](), there exists ![]() such that

(5) ![]()

Besides the scaling limit, this result:

- Identifies the leading-order constant in the superdiffusivity, which in particular does not depend on $\nu$.

- Goes nearly to next-order and suggests that the next term in the asymptotic expansion of $\mathbf{E}^0\bigl[|X_t|^2\bigr]$ should be of size ![]().

- Extends the previous rigorous results from $d = 2$ to all dimensions $d \geq 2$. In higher dimensions $d > 2$, the typical case covered by the assumptions is when $\mathbf{f}$ has a matrix potential with entries given by independent copies of the $d$-dimensional log-correlated Gaussian field.

This result is also the first quenched one since, in contrast to the annealed results mentioned above, it proves superdiffusivity for almost every sample of the vector field. The jump from annealed estimates to quenched estimates for this problem is not a small one. Annealed estimates are often easier to obtain because one can rely on exact formulas and Gaussian identities which are available only after taking the expectation with respect to the law of the vector field. Quenched estimates, on the other hand, require completely different arguments, and I think it is fair to say that this kind of result was not completely expected, even by experts.

Heuristic derivation of the square root of log-superdiffusivity

This square root of log-superdiffusivity can be derived heuristically by thinking about the diffusivity as a function of the scale and observing how the diffusivity enhancements at each scale give rise to a recurrence relation for these renormalized diffusivities. If ![]() is the renormalized diffusivity at scale ![](), then this recurrence relation is roughly

(6) ![]()

The reason that ![]() appears in the denominator of the second term is that, as the effective diffusivity grows (as a function of the scale), the relative size of the vector field’s oscillations at these scales is smaller; consequently the enhancement due to advection is smaller.

A simple analysis of the recursion above yields that

![]()

See Section 1.3 of our paper for a longer heuristic explanation.
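To see how a recursion of this shape produces a square root, here is a small numerical sketch. It assumes, as a stand-in for (6), the schematic form $s_{k+1} = s_k + c / s_k$, where $s_k$ is the diffusivity at the $k$-th scale in a geometric sequence of scales (so that $k$ is proportional to the log of the length scale) and $c > 0$ is a constant. The names `s0`, `c` and the exact form of the recursion are illustrative assumptions, not the notation or constants of the paper. Squaring the recursion gives $s_{k+1}^2 = s_k^2 + 2c + c^2 / s_k^2$, so $s_k^2$ grows like $2ck$ and $s_k$ like the square root of the log of the scale.

```python
import math


def iterate_diffusivity(s0, c, n_steps):
    """Iterate the schematic recursion s_{k+1} = s_k + c / s_k from s_0 = s0."""
    s = s0
    for _ in range(n_steps):
        s += c / s
    return s


def ratio_to_sqrt_law(s0, c, n_steps):
    """Ratio of s_n to sqrt(2 c n), the growth suggested by s_k^2 ~ 2 c k."""
    return iterate_diffusivity(s0, c, n_steps) / math.sqrt(2.0 * c * n_steps)
```

Evaluating `ratio_to_sqrt_law` for different starting values `s0` and a large `n_steps` shows the ratio approaching 1 regardless of the starting value, which mirrors the statement that the leading-order constant does not depend on the starting (molecular) diffusivity.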

The core of the proof: iterative quantitative homogenization

We start by observing that statements about the process $X_t$ can be rephrased into statements about its infinitesimal generator, which is the elliptic operator

$\nu \Delta + \mathbf{f} \cdot \nabla$

We can rewrite this as a divergence-form operator

$\nabla \cdot (\nu I_d + \mathbf{k}) \nabla$

where $\mathbf{k}$ is the stream matrix for $\mathbf{f}$, the anti-symmetric matrix (defined up to an additive constant) whose row divergence is equal to $\mathbf{f}$.
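The passage from the generator to divergence form can be checked in coordinates. The following is a sketch, writing $\nu$ for the molecular diffusivity, $\mathbf{f}$ for the drift and $\mathbf{k}$ for the stream matrix, and assuming the index convention $(\nabla \cdot \mathbf{k})_j = \sum_i \partial_i \mathbf{k}_{ij}$; with the opposite convention the sign of $\mathbf{k}$ flips.

```latex
\begin{align*}
\nabla \cdot (\mathbf{k} \nabla u)
  &= \sum_{i,j} \partial_i \bigl( \mathbf{k}_{ij} \, \partial_j u \bigr) \\
  &= \sum_{i,j} (\partial_i \mathbf{k}_{ij}) \, \partial_j u
     + \sum_{i,j} \mathbf{k}_{ij} \, \partial_i \partial_j u \\
  &= (\nabla \cdot \mathbf{k}) \cdot \nabla u
   = \mathbf{f} \cdot \nabla u .
\end{align*}
% The second sum vanishes: k_{ij} is anti-symmetric in (i, j)
% while \partial_i \partial_j u is symmetric in (i, j).
% Consequently
%   \nabla \cdot (\nu I_d + \mathbf{k}) \nabla u = \nu \Delta u + \mathbf{f} \cdot \nabla u .
```

The same computation shows why adding a constant anti-symmetric matrix to $\mathbf{k}$ changes nothing: its divergence is zero, so the operator does not see it.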

The invariance principle is then equivalent to a statement concerning homogenization for this elliptic operator.

However, this is outside the purview of classical homogenization theory as the elliptic operator we want to analyze is unbounded. Here I do not mean simply that the coefficient matrix $\nu I_d + \mathbf{k}$ does not belong to $L^\infty$. Far worse, its $L^2$ oscillation in a ball $B_r$ scales like $(\log r)^{1/2}$, which obviously diverges as $r \to \infty$. Therefore, we have a large ellipticity contrast homogenization problem, with the ellipticity contrast actually getting worse as a function of the scale. The need for quantitative homogenization estimates is apparent, as one needs to homogenize the scales before the growing ellipticity contrast can hurt you.
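To make the link between a large anti-symmetric part and a large ellipticity contrast concrete, here is a small two-dimensional sketch. It assumes a constant coefficient matrix of the form $\nu I + \mathbf{k}$ with $\mathbf{k} = \begin{pmatrix} 0 & -h \\ h & 0 \end{pmatrix}$, and it assumes the common convention for non-symmetric coefficients in which the lower ellipticity constant is the smallest eigenvalue of the symmetric part of the matrix and the upper one is the reciprocal of the smallest eigenvalue of the symmetric part of its inverse. The function names and the scalar `h` are hypothetical.

```python
def inverse_2x2(a):
    """Inverse of a 2x2 matrix given as nested lists."""
    (p, q), (r, s) = a
    det = p * s - q * r
    return [[s / det, -q / det], [-r / det, p / det]]


def symmetric_part(a):
    """Symmetric part (a + a^T) / 2 of a 2x2 matrix."""
    return [[(a[i][j] + a[j][i]) / 2.0 for j in range(2)] for i in range(2)]


def ellipticity_bounds(nu, h):
    """Lower and upper ellipticity constants of a = nu * I + k, k = [[0, -h], [h, 0]].

    Both symmetric parts below are multiples of the identity, so their
    eigenvalues can be read off the diagonal.
    """
    a = [[nu, -h], [h, nu]]
    lower = symmetric_part(a)[0][0]            # sym(a) = nu * I
    inv_sym = symmetric_part(inverse_2x2(a))   # sym(a^{-1}) = nu / (nu^2 + h^2) * I
    upper = 1.0 / inv_sym[0][0]                # = nu + h^2 / nu
    return lower, upper
```

Under these assumptions the upper constant is $\nu + h^2 / \nu$, so the contrast is $1 + h^2 / \nu^2$: it grows quadratically in the size of the anti-symmetric part even though the symmetric part stays equal to $\nu I$.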

Moreover, unlike classical homogenization problems, here we need to iterate quantitative homogenization estimates an infinite number of times. This is intrinsic to the problem, since there are an infinite number of active scales responsible for the divergence of the renormalized diffusivities and thus the superdiffusivity.

In short, we need to use homogenization methods to formalize the renormalization group arguments of the physicists, and this requires new ideas and methods.

This strategy is very similar to that of a paper we wrote last year with Vlad Vicol. There we also used iterated, quantitative homogenization to prove anomalous diffusion for an advection-diffusion equation. The difference here is that our vector field is random and not built ad hoc from periodic ingredients. We are therefore faced with an (iterated) stochastic homogenization problem rather than a periodic one, and our active scales have no scale separation. The trade-off is that the superdiffusivity we prove here is slower.

A detailed overview of our proof strategy appears in Section 1.4 of the paper. It is based on the “renormalization and coarse-graining” approach to quantitative homogenization we have developed in recent years, and it relies on new quantitative homogenization results for high ellipticity contrast problems (which appear in a new preprint, joint with Tuomo Kuusi, which will be posted to the arXiv very soon).

Beyond the setting of this particular problem, we hope the present paper serves as a “proof of concept” that (infinitely) iterative quantitative homogenization can be used to formalize renormalization group arguments.

I didn’t realize that the large ellipticity contrast is actually related to the correlation property of the coefficient. Originally I thought it was just that the coefficient can be close to zero and infinity. Is this stressed somewhere in the paper I can read? Thanks!

I’m sorry for the late reply, Yu. I was not monitoring comments and didn’t see yours until now.

In this case, the anti-symmetric part of the coefficient field is the random field, and as you know its critical (logarithmic) correlations mean that its $L^2$ oscillation in a ball $B_r$ will be of order $(\log r)^{1/2}$. In $L^\infty$ it would be $\log r$. Since constant anti-symmetric matrices are not seen by the equation, what really matters in any finite domain is this oscillation. Thus, it grows like $(\log r)^{1/2}$. But the size of the anti-symmetric matrix is related to the ellipticity. In fact, if your field is $\nu I_d + \mathbf{k}$, like we have here, then the ellipticity is actually the square of the $L^2$ oscillation of $\mathbf{k}$ divided by $\nu$. So for this problem, ellipticity grows like the log of the length scale. This is why you are in a “race” between homogenization (making the effective ellipticity smaller) and the growing disorder (making the ellipticity larger).